Are featured snippets driven by a specific ranking algorithm that is separate from the core algorithm?

That’s my theory. For me, the idea holds (a lot of) water.

And I’m not alone. Experts such as Eric Enge, Cindy Krum, and Hannah Thorpe have the same idea.

To try to get confirmation or a rebuttal of that theory, I asked Gary Illyes this question:

Does the Featured Snippet function on a different algorithm than the 10 blue links?

The answer absolutely floored me.

He gave me an overview of what a new search engineer learns when they start working at Google.

Please remember that the system described in this article is confirmed to be true, but that some conclusions I draw are not (in italics), and that all the numbers here are completely invented by me.

The aim of this article is to give an overview of how ranking functions. Not what the individual ranking factors are, nor their relative weighting / importance, nor the inner workings of the multi-candidate bidding system. Those remain a super-secret (I 100% see why that is the case).

How Ranking Works in Google Search

What Are the Ranking Factors?

There are hundreds/thousands of ranking factors. Google doesn’t tell us what they are in detail (which, by the by, seems to me to be reasonable).

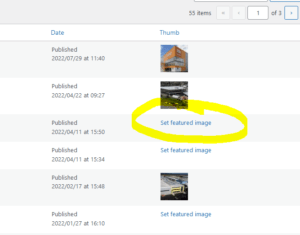

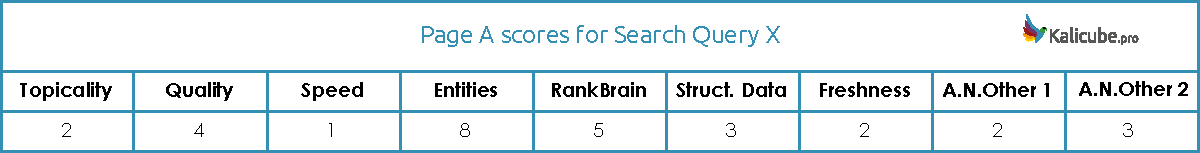

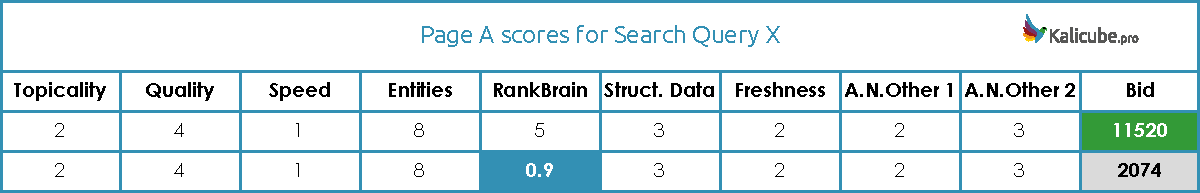

They do tell us that they group them: Topicality, Quality, PageSpeed, RankBrain, Entities, Structured Data, Freshness… and others.

A couple of things to point out here:

- Those seven are real ranking factors we can count on (in no particular order).

- Each ranking factor includes multiple signals. For example, Quality is mostly PageRank but also includes other signals, and Structured Data includes not only Schema.org but also tables, lists, semantic HTML5, and certainly a few others.

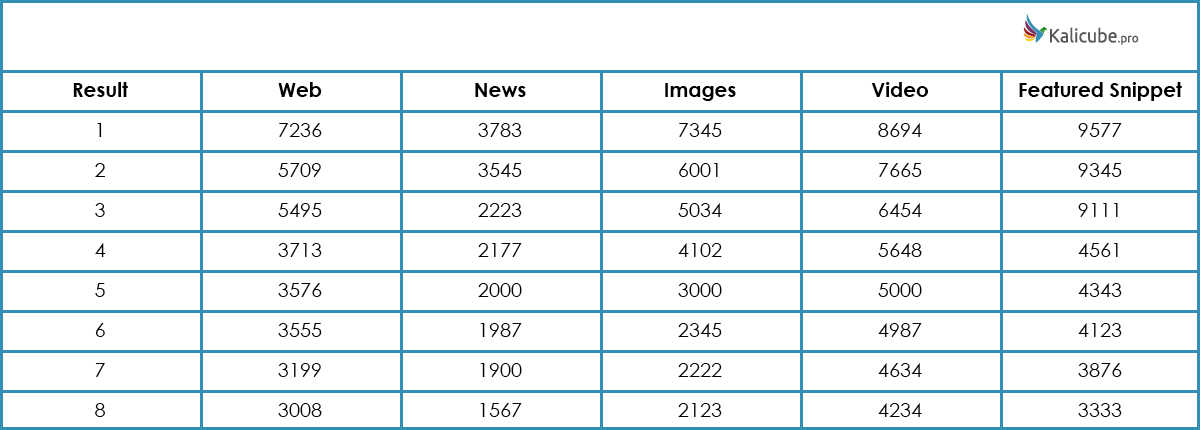

Google calculates a score for a page for each of the ranking factors.

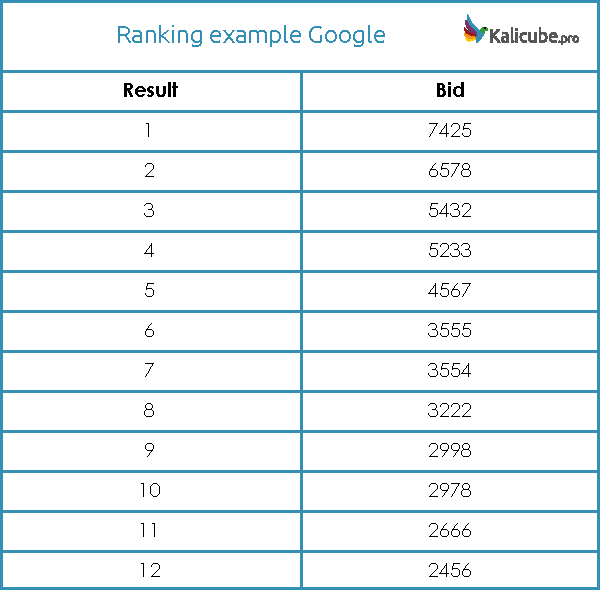

Something like this

Remember that throughout this article, all these numbers are completely hypothetical.

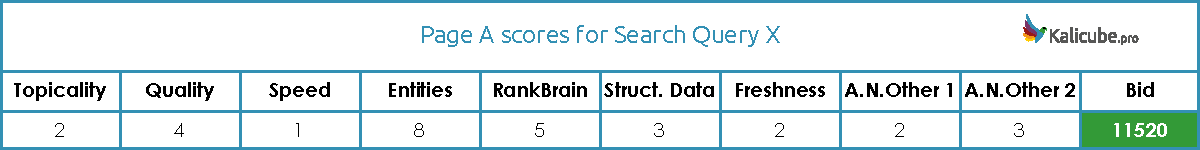

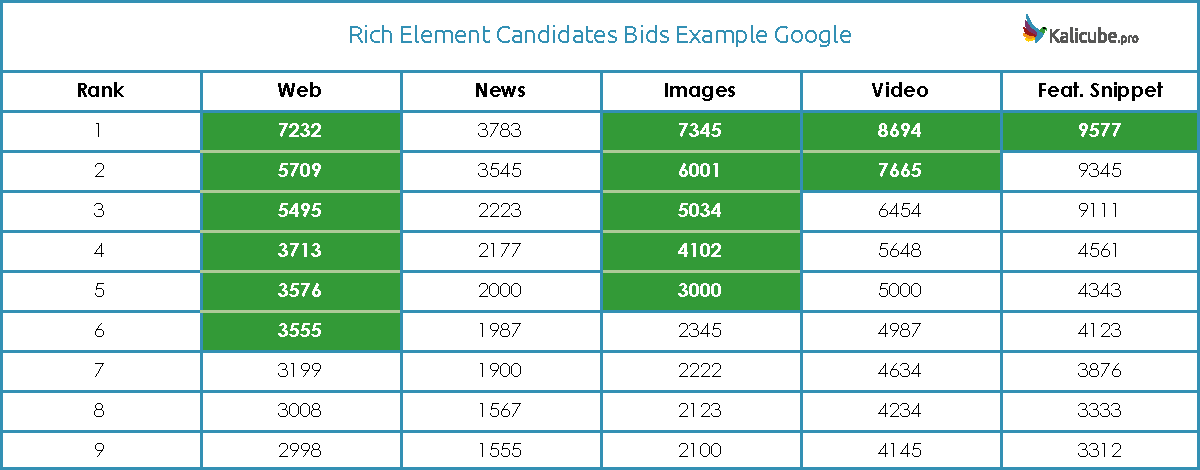

How Ranking Factors Contribute to the Bid

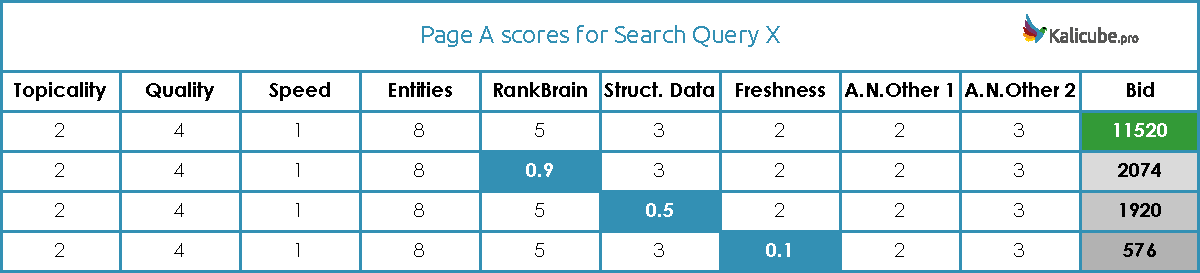

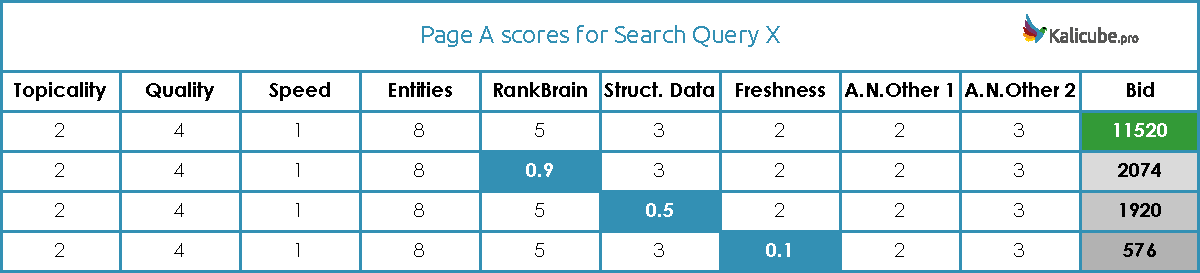

Google takes the individual ranking factor scores and combines them to calculate the total score (the term ‘bid’ is used, which makes super good sense to me).

Importantly, the total bid is calculated by multiplying these scores.

Something like this

The total score has an upper limit of 2 to the power of 64. I’m not 100% sure, but I think that is what Illyes said, so perhaps it is a reference to the Wheat and Chessboard problem, where the numbers on the second half of the chessboard are so phenomenally off-the-scale that the limit is effectively a kind of fail-safe buffer.

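That means these individual scores could comfortably be single or double digits, and even run into the hundreds, without the total hitting that upper limit (2 to the power of 64 is roughly 1.8 × 10^19, whereas seven scores of 100 multiply out to 10^14).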

That very high ceiling also means that Google can continue to throw in more factors and never have a need to “dampen” the existing scores to make space for the new one.
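To make the multiplication concrete, here is a minimal Python sketch. The factor names come from the list Google gave, but the scores, the `total_bid` function and the `BID_CEILING` constant are my own illustrative inventions, not anything Google has confirmed.

```python
from math import prod

# Hypothetical scores for one page. Every number here is invented.
page_scores = {
    "topicality": 5.0,
    "quality": 4.0,
    "page_speed": 3.0,
    "rankbrain": 6.0,
    "entities": 2.0,
    "structured_data": 4.0,
    "freshness": 3.0,
}

# The upper limit as I recall it being described (an assumption).
BID_CEILING = 2 ** 64


def total_bid(scores):
    """Combine the per-factor scores into one bid by multiplying them."""
    return prod(scores.values())


bid = total_bid(page_scores)
print(bid)                # 8640.0
print(bid < BID_CEILING)  # True
```

Adding an eighth factor is just one more entry in the dictionary. None of the existing scores needs to be scaled down to make room for it.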

Just up to there, my mind was already swirling. But it gets better.

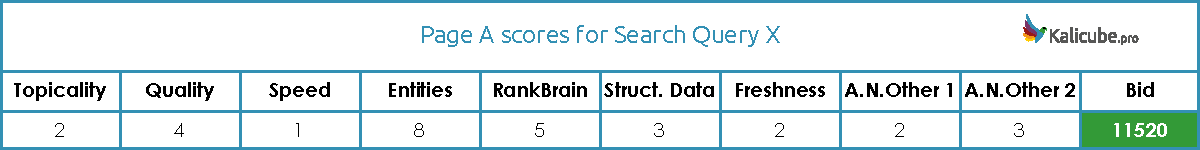

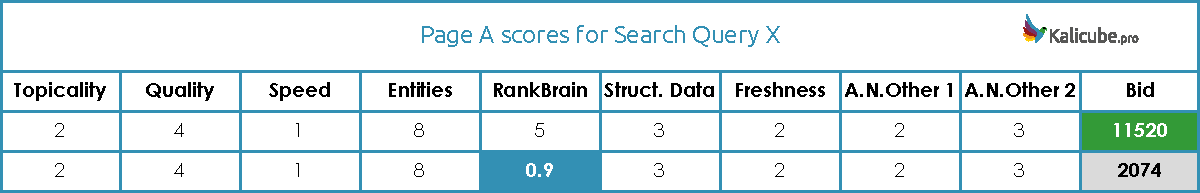

Watch out – One Single Low Score Can Kill a Bid

And the fact that the total is calculated by multiplication is a phenomenal insight. Why? Because any single score under 1 will seriously handicap that bid, whatever the other scores are.

Look at how the score tanks as just one factor drops slightly below 1. That is enough to put this page out of contention.

Dropping further below 1 will generally kill it off. It is possible to overcome a sub-1 ranking factor. But the other factors would need to be phenomenally strong.

Looking at the numbers below, one gets an idea of just how strong. Ignoring a weak factor is not a good strategy. Working to get that factor above 1 is a great strategy.

My bet here is that the super impressive ‘up and to the right SEO wins’ examples we (often) see in the SEO industry are examples of when a site *simply* corrects a sub-1 ranking factor.

This system rewards pages that have good scores across the board. Pages that perform well on some factors, but badly on others will always struggle. A balanced approach wins.

Credit to Brent D Payne for making this great analogy: “Better to be a straight C student than 3 As and an F”.
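Brent’s analogy can be checked with a few lines of Python. This is only a sketch with invented scores: `balanced`, `lopsided` and `fixed` are hypothetical pages, and the multiplication is the only part that comes from what I was told.

```python
from math import prod


def total_bid(scores):
    """Multiply the per-factor scores into a single bid."""
    return prod(scores)


# All numbers are invented for illustration.
balanced = [2.0, 2.0, 2.0, 2.0]  # the "straight C student"
lopsided = [4.0, 4.0, 4.0, 0.2]  # "3 As and an F": one factor is below 1
fixed = [4.0, 4.0, 4.0, 1.0]     # same page once the weak factor reaches 1

print(total_bid(balanced))  # 16.0
print(total_bid(lopsided))  # roughly 12.8
print(total_bid(fixed))     # 64.0
```

In this made-up case, the three strong factors are twice as good as the balanced page’s, yet the single 0.2 drags the total below it. Lifting that one factor to 1 multiplies the bid by five, which is the kind of jump I suspect sits behind many "up and to the right" charts.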

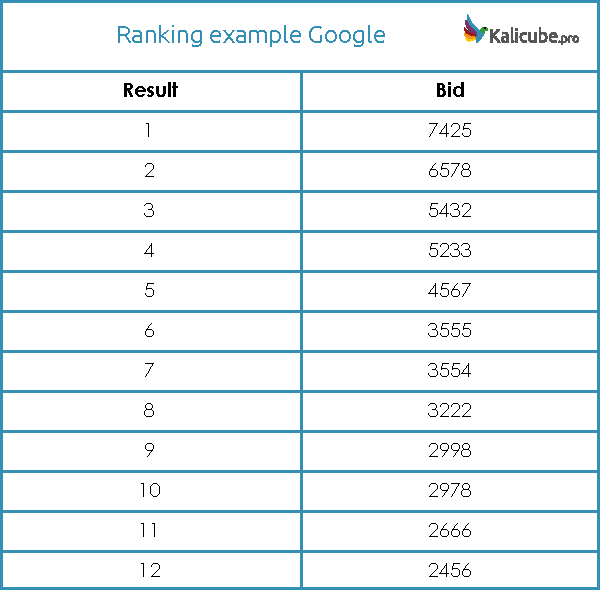

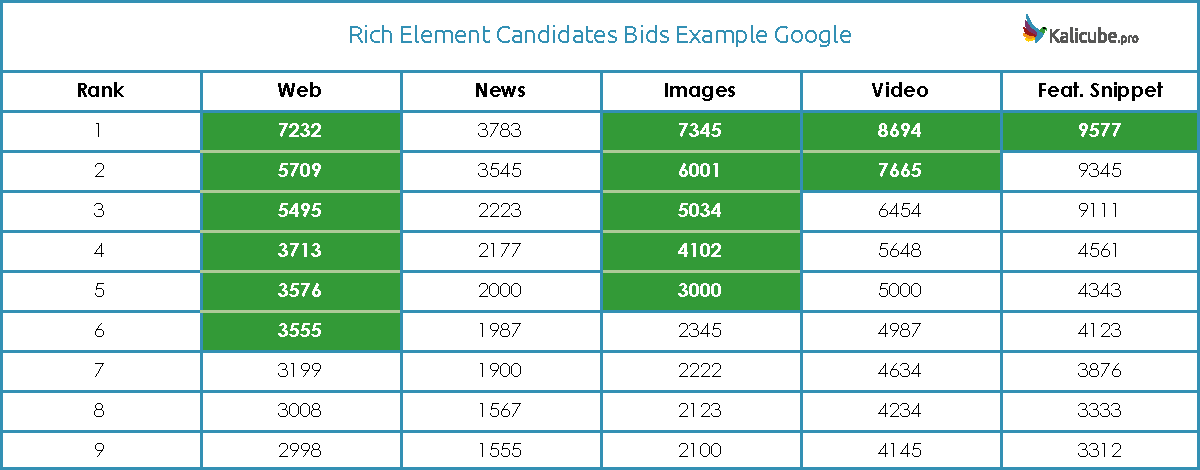

What a Bid-Based Ranking Looks like

This is just an example

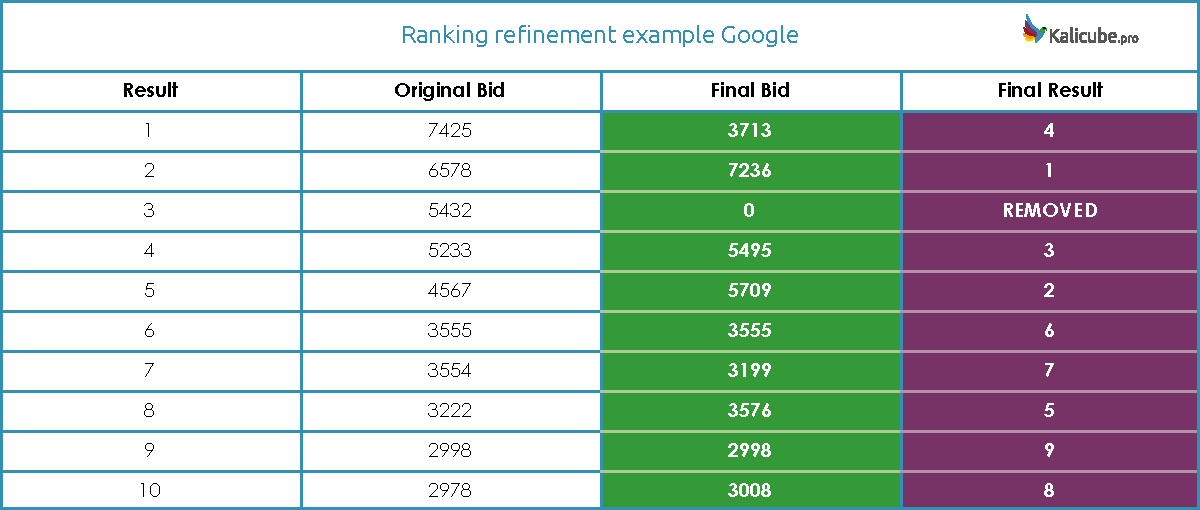

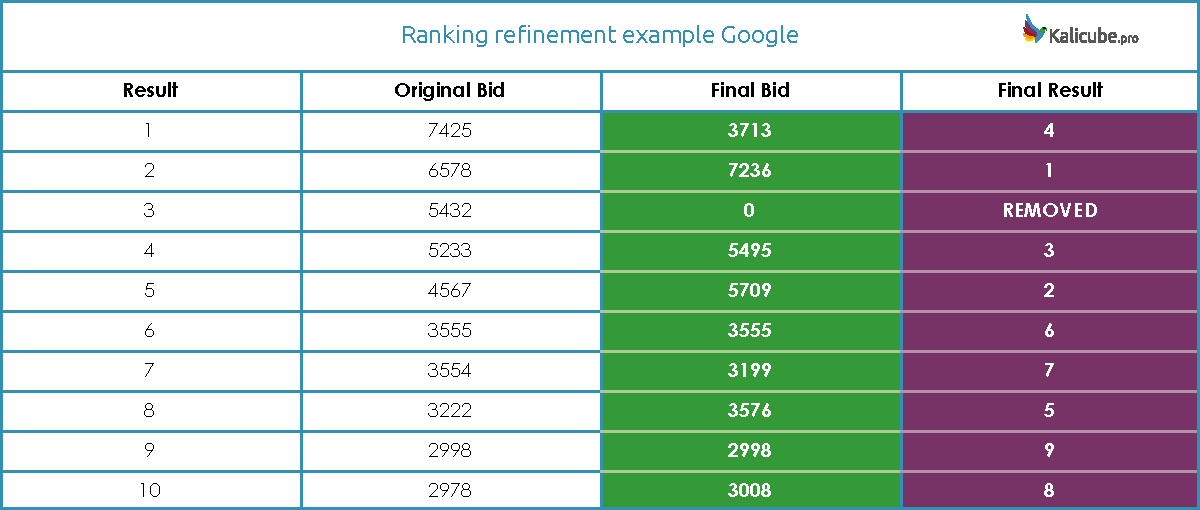

Refining the Bids for a Final Ranking

The top results (let’s say 10) are sent to a second algorithm that is designed to refine the ranking and remove any unacceptable results that slipped through the net.

The factors taken into account here are different and appear to be aimed at specific cases.

This recalculation can raise or lower a bid (or conceivably leave it the same).

My understanding is that it is most likely to push a bid down. I’ll take that further and suggest that this is a filter currently aimed principally at blocking irrelevant, low quality and black hat content that the initial algorithm missed.

So we are looking at a final set of bids that might look something like this.

Note that in this example, one result gets one zero score and is therefore completely removed from consideration / eliminated (remember, because we are multiplying, any individual zero score will guarantee that the overall score is also zero). And that is seriously radical. And a very significant fact, however you look at it.

Such a zero can be generated algorithmically.

My guess is that a zero could additionally serve as a way to implement some manual actions (this is a pretty big jump from what I was told, and is my conclusion and has in no way been confirmed by anyone at Google).

What is sure is that the order changes and we have a final list of results for the web / “10 blue links.”
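Here is a rough Python sketch of that second pass, under my own assumptions: the page names, the initial bids and the refinement multipliers are all invented, and `refine` is a hypothetical function, not Google’s. It only illustrates the mechanics described above: shortlist the top bids, multiply each by a few extra scores, drop anything that hits zero, then re-sort.

```python
from math import prod

# Invented bids from the first algorithm.
initial_bids = {
    "page_a": 8640.0,
    "page_b": 7200.0,
    "page_c": 6900.0,
    "page_d": 5000.0,
}

# Invented refinement scores. A 0.0 stands for an unacceptable result.
refinement = {
    "page_a": [1.0, 0.8],
    "page_b": [1.1, 1.0],
    "page_c": [1.0, 0.0],
    "page_d": [1.2, 1.3],
}


def refine(bids, adjustments, top_n=10):
    """Re-score the top bids and remove any that end up at zero."""
    shortlisted = sorted(bids, key=bids.get, reverse=True)[:top_n]
    refined = {
        url: bids[url] * prod(adjustments.get(url, [1.0]))
        for url in shortlisted
    }
    survivors = {url: bid for url, bid in refined.items() if bid > 0}
    return sorted(survivors.items(), key=lambda item: item[1], reverse=True)


final_ranking = refine(initial_bids, refinement)
print([url for url, _ in final_ranking])  # ['page_b', 'page_d', 'page_a']
```

In this invented example, page_a started on top and ends up third, page_d climbs from fourth to second, and page_c disappears entirely because of its single zero.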

If that weren’t enough for one day, now it gets really interesting.

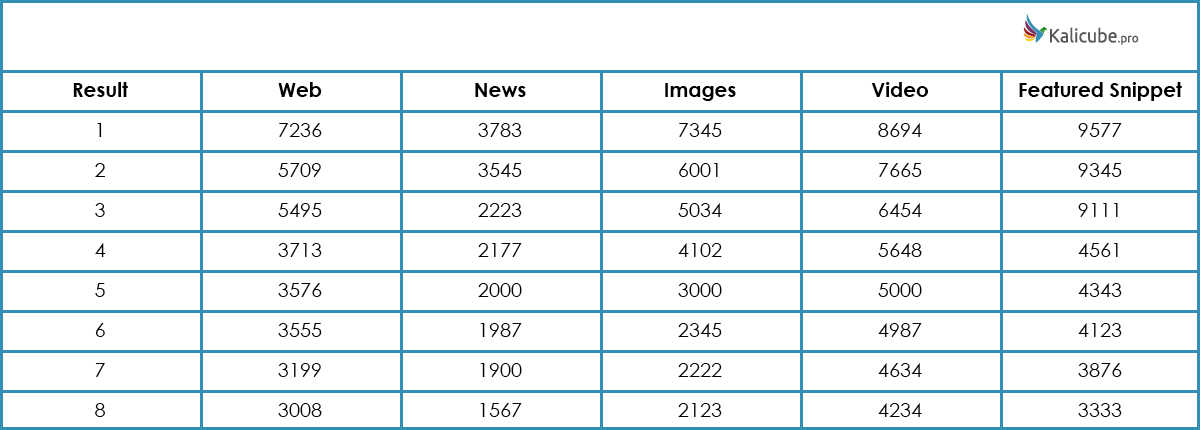

Rich Elements Are ‘Candidate Result Sets’ (My Term, Not Google’s)

Candidate Result Sets Compete for a Place on Page 1

Each type of result/rich element is effectively competing for a place on page 1.

News, images, videos, featured snippets, carousels, maps, GMB, etc. – each one provides a list of candidates for Page 1 with their bids.

There is already quite a variety competing to appear on Page 1, and that list keeps on growing.

With this system, there is no theoretical limit to the number of rich elements that Google can create to bid for a place.

Candidate Result Ranking Factors

The terms ‘Candidate Result’ and ‘Candidate Result Set’ are from me, not from Google.

The combination of factors that affect ranking in these candidate result sets is necessarily specific to each since some factors will be unique to an individual candidate result set and some will not apply.

An example would be alt tags that apply to the Images candidate result set, but not to others, or a news sitemap that would be necessary for the News candidate result set, but have no place in a calculation for the others.

Candidate Result Set Ranking Factor Weightings

The relative weighting of each factor will also necessarily be different for each candidate result set since each one provides a specific type of information in a specific format.

And the aim is to provide the most appropriate elements to the user in terms of:

- The content itself.

- The media format.

- The place on the page.

For example, freshness is going to be a heavily weighted factor in News, and perhaps RankBrain for Featured Snippets.

Candidate Result Set Bid Calculations

The bids provided by each candidate result set are calculated in the same way as the first Web/blue links example (by multiplication and, I assume, with the second refinement algorithm).

Google then has multiple candidates bidding for a place (or several places, depending on the type).
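One way to picture set-specific factors and weightings is the Python sketch below. Everything in it is my assumption: I have no idea how Google applies weights, so I simply raise each score to a per-set exponent before multiplying, and I treat a missing factor as a zero. The `WEIGHTS` table, the factor names `alt_text` and `news_sitemap`, and the scores are all invented.

```python
from math import prod

# Hypothetical weights: each candidate result set lists only the factors
# that apply to it, with an exponent that acts as the weighting.
WEIGHTS = {
    "news": {"topicality": 1.0, "freshness": 2.0, "news_sitemap": 1.0},
    "images": {"topicality": 1.0, "alt_text": 1.5},
}


def set_bid(result_set, scores):
    """Bid for one candidate result set, using only that set's factors.

    A factor the set needs but the page lacks counts as 0.0 (my assumption),
    which zeroes the whole bid because everything is multiplied.
    """
    weights = WEIGHTS[result_set]
    return prod(scores.get(factor, 0.0) ** weight
                for factor, weight in weights.items())


# Invented scores for a single page.
page = {"topicality": 3.0, "freshness": 2.0, "news_sitemap": 1.0, "alt_text": 4.0}

print(set_bid("news", page))    # 3.0 * 2.0**2 * 1.0 -> 12.0
print(set_bid("images", page))  # 3.0 * 4.0**1.5     -> 24.0
```

The same page produces a different bid in each set: freshness counts double for News and is ignored for Images, while the alt text only matters for Images.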

Pulling It All Together for Page 1

Candidate Result Sets Bid Against Each Other

My initial question was about the Featured Snippet, and I am certain that the top bid from that specific candidate result set had to outbid the top result for the Web to “win.”

For the others, that doesn’t make 100% sense. So I am assuming the rules to “win” are different for each candidate result set.

The rules I used to make these winning choices are fictional, and not how Google really does this.

Google is looking for any rich result that will provide a “better” solution for the user.

When it does identify a “better” candidate result, that result is given a place (at the expense of one or more classic blue links).

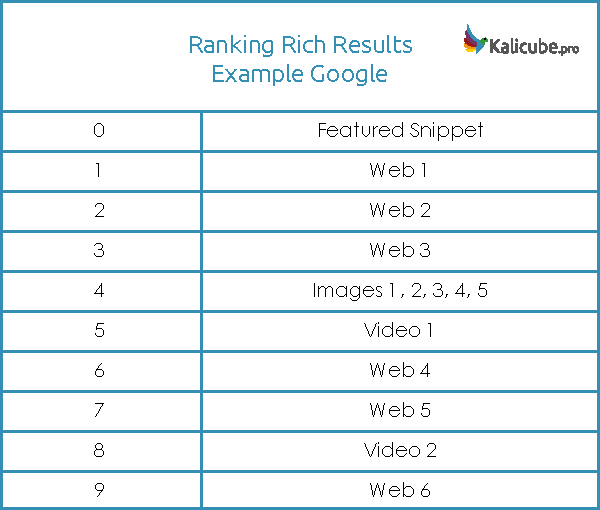

The Final Choice of Rich Elements on Page 1

Each candidate result set is subject to specific limitations – and all are subservient to the traditional web results/classic blue links (for the moment, at least).

- One result, one possible position (featured snippet, news, for example)

- Multiple results, multiple possible positions (images, videos, for example)

- Multiple results, one possible position (news, carousel, for example)

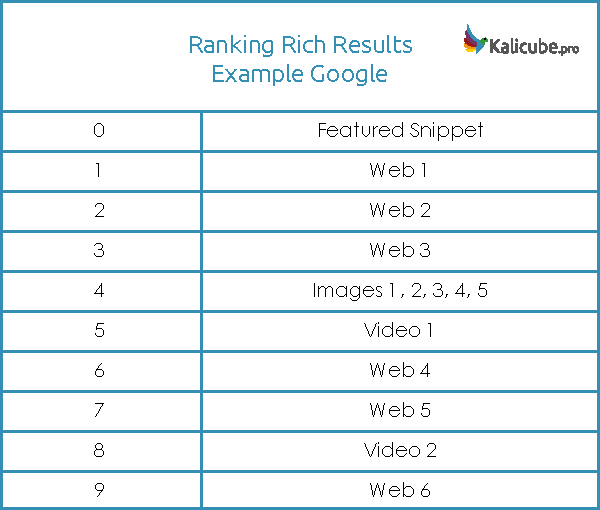

And the winners in my example are (remember that the rules I used to make these choices are fictional, and not how Google really does this)…

- News: Failed to outbid the #1 Web bid and is therefore not sufficiently relevant and does not win a place.

- Images: We have one winner. The space allotted is 5 so the other 4 get a free ride.

- Video: Two are outbidding the top web result so they both get a place.

- Featured Snippet: We have several winners. But only one is used. Because this is “the” answer.
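For anyone who prefers code to prose, the fictional rules I used for the list above can be sketched in Python like this. To be clear, `pick_winners`, the `fill_block` flag, the slot counts and every bid are invented by me to reproduce my example. This is not how Google does it.

```python
def pick_winners(candidates, top_web_bid, max_slots, fill_block=False):
    """Apply my made-up rule: a candidate wins if it outbids the #1 web bid.

    candidates is a list of (name, bid) pairs. With fill_block=True, a single
    winner is enough to show the whole block, so the next-best candidates
    get a free ride up to max_slots.
    """
    ranked = sorted(candidates, key=lambda c: c[1], reverse=True)
    winners = [c for c in ranked if c[1] > top_web_bid]
    if not winners:
        return []
    if fill_block:
        return ranked[:max_slots]
    return winners[:max_slots]


TOP_WEB_BID = 7920.0  # invented

news = [("news_1", 7000.0)]
images = [("img_1", 8200.0), ("img_2", 6000.0), ("img_3", 5500.0),
          ("img_4", 5000.0), ("img_5", 4800.0), ("img_6", 3000.0)]
videos = [("vid_1", 9000.0), ("vid_2", 8500.0), ("vid_3", 4000.0)]
snippets = [("fs_1", 9500.0), ("fs_2", 9100.0), ("fs_3", 8000.0)]

print(pick_winners(news, TOP_WEB_BID, max_slots=1))       # no place
print(pick_winners(images, TOP_WEB_BID, max_slots=5, fill_block=True))
print(pick_winners(videos, TOP_WEB_BID, max_slots=3))     # two places
print(pick_winners(snippets, TOP_WEB_BID, max_slots=1))   # one answer only
```

News gets nothing, the image block shows five results on the strength of one winner, two videos get a place, and only the best of the three winning featured snippets is used.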

We have our final page and it looks something like this.

As places are given to rich elements, the lower positioned web results drop onto Page 2. Which rather hammers home that we really should not take our eyes off the ongoing demise of blue links.

I reiterate: I have no information about how positions are attributed to the videos or images – I attributed positions to them with my own invented simplistic system, not Google’s. 🙂

To End – A Little Theorizing From Me

All of this last chunk is my initial thoughts as I digest all this. Not attributable to Gary or Google.

Darwinism in Search Results

It seems to me that some rich elements will “naturally” grow and win a place on Page 1 increasingly often (featured snippet being an example that we are seeing in action today).

Others will “naturally” shrink (classic blue links on mobile). And some could “naturally” die out entirely. All very Darwinian!

This System Isn’t Going Away Any Time Soon

Google’s “rich element ranking” system has an in-built capacity to expand and adapt to changes in result/answer delivery. Organically!

New devices, new content formats, personalization… Google can simply create a new rich element, add it to the system and let it bid for a place. It will win a place in results when it is a more appropriate option than the classic blue links. Potentially, over time it will naturally dominate in the micro-moment it is most suited to.

Darwinism in Search. Wow!

Don’t know about you, but all in all, my mind is blown.

Image Credits

Featured Image & In-Post Images: Created by Véronique Barnard, May 2019